The full and final methodology used to create the 2010 Times Higher Education World University Rankings was unveiled this week.

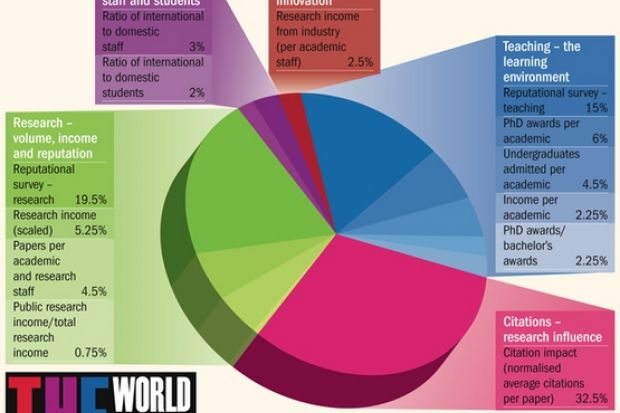

The new global rankings, which will be published on 16 September, are built on 13 separate performance indicators, compared with just six used in the old ranking system.

Times Higher Education reported last week on the headline weightings given to five broad performance categories:

• Teaching – the learning environment (30 per cent)

• Citations – research influence (32.5 per cent)

• Research – volume, income and reputation (30 per cent)

• International mix – staff and students (5 per cent)

• Industry income – innovation (2.5 per cent).
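As a rough illustration of how these headline weightings fit together, the sketch below checks that the five category weights sum to 100 per cent and combines them into a single score. The article does not say how the overall score is calculated, so the simple weighted average, the `overall_score` function, the 0-100 scale and the example scores are all assumptions made for illustration, not THE's published formula.

```python
# Category weights (per cent) as reported in the article.
WEIGHTS = {
    "teaching": 30.0,
    "citations": 32.5,
    "research": 30.0,
    "international_mix": 5.0,
    "industry_income": 2.5,
}


def overall_score(category_scores):
    """Weighted average of category scores.

    Assumption: each category score is on a 0-100 scale and the overall
    score is a plain weighted average. The article does not confirm this.
    """
    total_weight = sum(WEIGHTS.values())
    weighted_sum = sum(WEIGHTS[name] * category_scores[name] for name in WEIGHTS)
    return weighted_sum / total_weight


# Hypothetical scores for an imaginary institution (not real data).
example_scores = {
    "teaching": 80.0,
    "citations": 90.0,
    "research": 70.0,
    "international_mix": 60.0,
    "industry_income": 40.0,
}

print(sum(WEIGHTS.values()))          # total weight across the five categories
print(overall_score(example_scores))  # weighted overall score for the example
```

With weights like these, a strong citations score moves the overall result far more than industry income does, which reflects the relative emphasis described above.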

The exact weighting given to each of the 13 indicators used in each category is shown in the accompanying graphic.

“Our 13 indicators have been chosen and carefully weighted after detailed consultation,” said Ann Mroz, editor of THE. “We are confident that we have developed the most sophisticated and robust global rankings system ever, reflecting all three of the core missions of a university – teaching, research and knowledge transfer.”

In a significant departure from the rankings published over the previous six years, THE confirmed that it has reduced the weighting given to the results of subjective opinion polls. Some 50 per cent of the old world rankings (2004-09) were based on opinion – 40 per cent on an academic reputation survey and 10 per cent on a poll of employers.

The new system will give reputation measures a weighting of 34.5 per cent. To determine this, Ipsos MediaCT carried out a new Academic Reputation Survey, which attracted 13,388 responses from academics, representative of global scholarship. For the first time in global rankings, the survey also includes reputation measures of teaching quality. The results relating to research account for 19.5 per cent, and those relating to teaching account for 15 per cent, giving a total of 34.5 per cent.

“Even though we now have a rigorous reputation survey that stands as a serious piece of research, we still felt that the influence of opinion on the final ranking scores should not be as high as in the past,” Ms Mroz said.

“We have reduced the weight given to opinion from half under our old system to just over a third. The new tables pay more attention to evidence.”

The new rankings accord teaching greater prominence – five separate indicators that aim to capture a sense of the teaching and learning environment make up 30 per cent of the total score.

The new system also gives a much lower weighting to staff-to-student ratios (SSR) than in the past, in response to concerns that they are not strong proxies for teaching quality and can be manipulated easily.

While an SSR was previously weighted at 20 per cent, it is now worth only 4.5 per cent in total.

Waiting and weighting

“People complained that just as you cannot tell the quality of the food in a restaurant by the number of waiting staff, you cannot really get an idea of teaching quality through a staff-to-student ratio,” Ms Mroz said. “While we still believe that an SSR is a valuable indicator, we have reduced its influence on the overall score.”

For the first time, the rankings include four separate measures of income, covering income per academic as part of the broad “teaching – the learning environment” indicator, as well as research income in a number of areas.

But in response to consultation, this self-reported information will collectively be given a relatively low weighting – just 10.75 per cent in total.

In another departure from previous rankings, THE has reduced the weighting given to the proportion of international staff and international students on campus.

Under the old system, these measures accounted for 5 per cent each. Under the new system, they are worth no more than 5 per cent between them.

“We have spent 10 months listening to critics of our old rankings system, collecting and testing new data and developing this new methodology,” Ms Mroz said.

“No global rankings system will be perfect, but we believe we have created a valuable tool fit for the new, globalised era of higher education.”
