Universities that saw some of the biggest improvements in research funding in light of the 2008 research assessment exercise actually experienced a decline in their overall research performance in the years since the previous exercise in 2001.

An analysis conducted exclusively for Times Higher Education also shows that institutions that achieved some of the most substantial improvements in research performance were not rewarded with larger slices of the funding pie for 2009-10.

The analysis, by UK data-analysis specialists Evidence Ltd, measured research performance by the number of times universities' research publications were cited.

The findings could have implications for the future research hierarchy under the forthcoming research excellence framework (REF), which will replace the RAE with a system that will distribute research funding based on numerical indicators of quality, such as citations, instead of peer review.

Efforts unrewarded

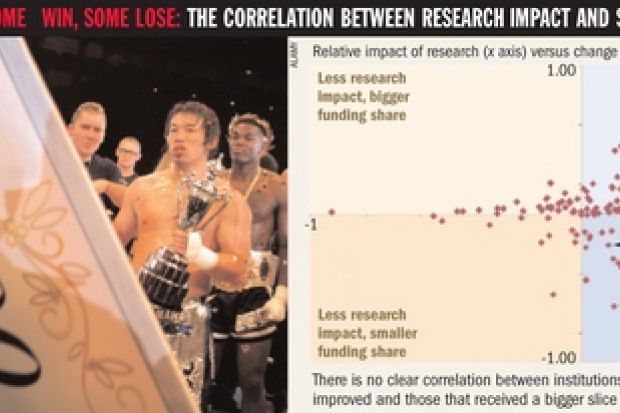

The analysis looks at how universities' shares of research funding have changed between the 2001 and the 2008 RAEs. Research funding for 2002-03 is compared with that for 2009-10.

Each institution's funding performance is compared with its research performance as measured by whether it increased or decreased its average citation levels.

All research papers published by institutions between 1998 and 2000 were compared with all those published between 2005 and 2007. The figures were normalised by subject and compared with global citation baselines.
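As a rough illustration of what subject normalisation against a global baseline can look like, the sketch below divides each paper's citation count by a world-average figure for its subject and averages the ratios for each publication window. It is not Evidence Ltd's actual method, which the article does not spell out; the `Paper` type, the `normalised_impact` function, the subjects and all the numbers are hypothetical.

```python
from collections import namedtuple

# Hypothetical record type: one paper with its subject and citation count.
Paper = namedtuple("Paper", ["subject", "citations"])


def normalised_impact(papers, baselines):
    """Average of each paper's citations divided by the world baseline
    for its subject. A value of 1.0 means 'cited at the world average'."""
    if not papers:
        raise ValueError("no papers to assess")
    ratios = [p.citations / baselines[p.subject] for p in papers]
    return sum(ratios) / len(ratios)


# Made-up world-average citations per paper, by subject, for each window.
baselines_1998_2000 = {"physics": 10.0, "history": 2.0}
baselines_2005_2007 = {"physics": 8.0, "history": 2.0}

# Made-up output of a single institution in each window.
papers_1998_2000 = [Paper("physics", 12), Paper("history", 2)]
papers_2005_2007 = [Paper("physics", 12), Paper("history", 3)]

impact_early = normalised_impact(papers_1998_2000, baselines_1998_2000)
impact_late = normalised_impact(papers_2005_2007, baselines_2005_2007)

# A positive change means the institution improved relative to the world baseline.
change = impact_late - impact_early
print(f"early={impact_early:.2f} late={impact_late:.2f} change={change:+.2f}")
```

Dividing by a subject baseline is what lets a history paper and a physics paper be averaged together, since typical citation counts differ widely between fields.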

The results show no clear correlation between institutions that have seen a relative improvement in their performance as measured by citations and those that received a bigger slice of research cash.

Queen Mary, University of London, the University of Nottingham, Imperial College London and the University of Southampton all improved their indexed research performance by broadly the same amount, but only Queen Mary and Nottingham increased their share of funding substantially.

Imperial and Southampton suffered marked reductions in their funding levels.

Group analysis

The analysis separates universities into three groups: the Russell Group of large research-intensive universities; the 1994 Group of smaller research-intensive institutions; and the post-92 universities.

The results show an improvement in research performance at 14 of the 16 Russell Group universities. Only four of them, however, gained a larger share of research funding, and ten saw their share fall.

Compared with Russell Group members, a number of 1994 Group members showed a greater relative improvement in performance, but only some of them increased their share of research funding.

Finally, in the post-92 group, 14 showed a drop in overall performance but increased their share of research cash.

Jonathan Adams, director of Evidence Ltd, said discrepancies between the relative changes in performance and funding were partly the result of the different RAE strategies employed by universities.

More importantly, he said, the discrepancies highlighted a "fundamental" shift in the logic used to assess departments between the 2001 and the 2008 RAEs.

The former gave each department a summative grade, and funded research according to that. The latter was set up to reveal and fund research excellence wherever it was found, which led to "pockets of excellence" being identified and rewarded in teaching-led institutions.

"If the methodology was flowing in the same way, you would expect to see universities that had improved by the same relative amount getting broadly similar changes in reward," Dr Adams said. "We have changed our funding logic, and as a result it has produced different outcomes.

"It doesn't necessarily mean that the 2008 RAE was wrong, nor that 2001 was right: what it says is that we are in a different world."

Dr Adams said that the higher education sector would have to reflect on the questions the analysis had raised as it moved towards a new system of deciding research funding.

"Does it mean the wrong people were being funded before, or does it mean the wrong people are being funded now? Was the money over-concentrated before, or has it been unduly dispersed now?

"That is the imponderable, and there is an enormous debate here."

Implications for the future

The results appear to have implications for the REF, which will favour citations as a factor in determining funding.

If funding equates to citations, the post-92s could see their newly won research income diminish.

Dr Adams declined to draw any conclusions about the REF. "The implications for (it) are unclear," he said. "We don't know the methodology for the REF, and we don't know how closely funding in the REF would follow the pattern here."
